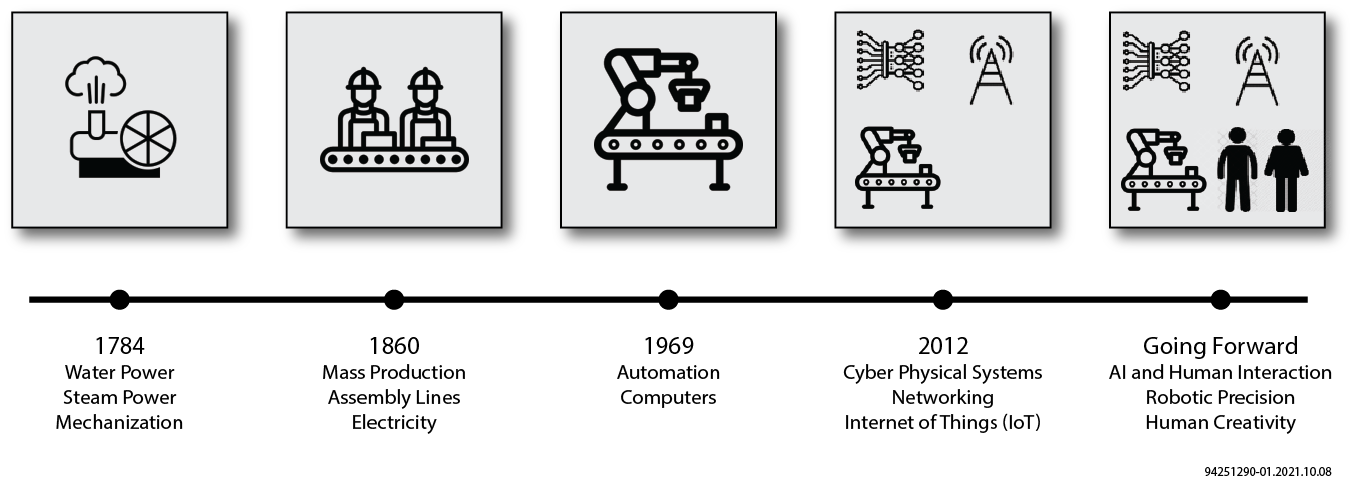

Over the last three hundred years, industry has evolved from human manufacturing lines to fully automated, computer-networked, artificial intelligence (AI)-driven, high-volume manufacturing environments. Today, Industry 4.0 is supported by 4G/5G and WiFi 5/6 wireless technologies, AI, robotics and complete automation. Industry 5.0 brings us full circle by combining the precision and efficiency of robotic systems, driven largely by AI, with the ingenuity and real-time thought of the human mind. The result is a better-optimized manufacturing environment.

Evolution of Industry

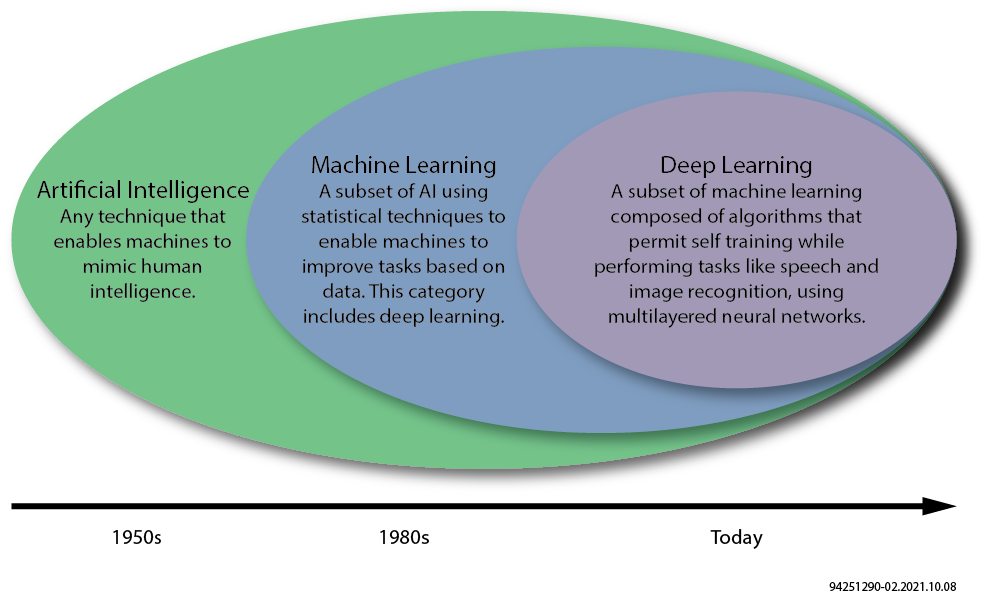

AI became relevant in the manufacturing environment as Industry 4.0 evolved in the early 2010s. Many applications leverage AI today, streamlining manufacturing and business operations, processes, security and the supply chain. Using predictive algorithms, AI can monitor equipment, optimize maintenance schedules and ultimately predict mechanical failure. Supply chain management for manufacturing materials can also leverage predictive algorithms, keeping lines up and running more efficiently. By considering both past and present business demand, AI algorithms can aid in forecasting future business. These AI systems can be tied to supply chain and inventory systems, which leads to faster time to revenue and lower overhead costs.
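As a rough illustration of the equipment-monitoring idea, the sketch below flags sensor readings that deviate sharply from their recent history. It is a simple rolling z-score check, not a trained AI model and not an Achronix method. The function name `flag_anomalies`, the window size, the threshold and the sample vibration values are all assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, stdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that differ from the mean of the
    preceding `window` readings by more than `threshold` standard
    deviations. Hypothetical helper for illustration only."""
    history = deque(maxlen=window)  # trailing window of recent readings
    flagged = []
    for i, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            # Skip the check when the window is perfectly flat (sigma == 0).
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                flagged.append(i)
        history.append(value)
    return flagged


# Made-up vibration readings (mm/s) with one spike at index 7.
vibration_mm_s = [2.0, 2.1, 1.9, 2.0, 2.1, 2.0, 1.9, 4.5, 2.0, 2.1]
print(flag_anomalies(vibration_mm_s))  # [7]
```

A production system would more likely use a learned model over many sensor channels, but the same pattern applies: compare incoming data against expected behavior and schedule maintenance when the deviation grows.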

Robotics has been an essential component since Industry 3.0. As we move into Industry 5.0, robotic systems will need adaptive AI algorithms, most certainly deep-learning (DL) algorithms, that are capable of responding to human creativity and feedback. The ability to adapt in real time with minimal latency will be very important.

AI/ML/DL Spectrum

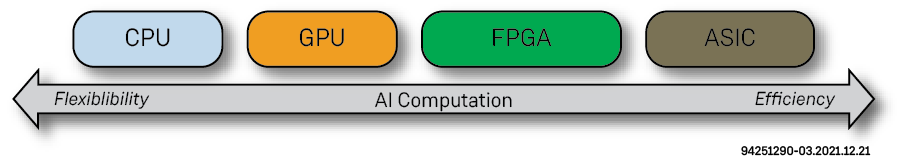

One of the fastest-growing markets in the semiconductor world is data acceleration. Three approaches compete for data acceleration: the GPU, the FPGA and the custom ASIC. CPUs will always have the highest flexibility, but at a cost in power, performance and price compared with dedicated data accelerators. ASICs deliver the highest efficiency and performance; however, they are fixed-function and do not provide the flexibility needed to adapt to changing AI algorithms, specifications, newer technologies, vendor-specific requirements and workload optimizations. GPUs, which have been the traditional workhorse in the core data center, are limited to purely computational use cases. In most cases they cannot accelerate networking or storage, and they deliver their compute at the expense of power and cost.

In contrast, FPGAs are capable of network, compute and storage acceleration, combining the speed of an ASIC with the flexibility needed to deliver optimal data acceleration in today's core and edge data centers. Beyond data acceleration, FPGAs will play a critical role in sensor fusion and in consolidating incoming data traffic, laying the groundwork for data consumption.

CPUs vs GPUs vs FPGAs vs ASICs

Achronix develops FPGA-based data acceleration products for AI/ML compute, networking and storage applications. Unlike other high-performance FPGA vendors, Achronix offers both standalone Speedster7t FPGAs and Speedcore embedded FPGA IP. The combination of GDDR6 memory, machine learning processors (MLPs) and the revolutionary two-dimensional network on chip (2D NoC) leads to high-efficiency, high-density compute for ML and DL neural network workloads.

For a more in-depth look, see Achronix FPGAs Optimize AI in Industry 4.0 and 5.0 (WP027).